Why AI-Drafted Contracts May Be Making Agreements Worse

The promise sounded compelling: AI tools that could draft, review, and optimize contracts in seconds, eliminating weeks of attorney time and thousands in legal fees. Companies raced to adopt AI contract review platforms, convinced that automation would solve the perennial problem of contract bottlenecks. Three years into this experiment, a troubling pattern is emerging—many AI-drafted and AI-reviewed contracts are creating more problems than they solve.

The issues aren't what critics predicted. AI tools rarely make obvious errors like inserting the wrong party names or garbling standard clauses. The problems are subtler and more insidious: contracts that are technically correct but practically unworkable, provisions that pass automated checks but create relationship friction, terms that score well on AI metrics but fail to address actual business needs.

This degradation in contract quality isn't immediately visible. AI-generated contracts look professional. They include all the expected sections. They use proper legal terminology. But they often lack the judgment that experienced attorneys bring to contract drafting—understanding which provisions matter for this specific relationship, recognizing when standard language won't work for this particular deal, anticipating where terms might create future disputes.

The proliferation of contract review AI tools compounds this problem. Different tools evaluate contracts using different criteria, produce different scores for the same provisions, and generate conflicting recommendations. Companies using multiple tools to cross-check each other end up more confused than if they'd used none.

The Subtle Ways AI Is Breaking Contracts

AI contract review systems optimize for the wrong things. They score provisions by favorability relative to their training data: whether a clause is more customer-favorable or vendor-favorable than the thousands of agreements the tool has analyzed. This comparative analysis seems objective, but it misses the context that determines whether specific terms work for a specific relationship.

When "Optimized" Actually Means "Worse"

Consider a liability cap. AI tools can instantly tell you whether a proposed cap is above or below market standards, whether it's vendor-favorable or customer-favorable relative to benchmarks. What they can't tell you is whether the cap is appropriate given this vendor's actual risk exposure, this customer's specific concerns, and this relationship's unique dynamics.

The "optimal" term, according to AI scoring, might be exactly wrong for this situation. This optimization without context leads to homogenization, where contracts become increasingly similar regardless of whether similarity serves the parties.

What AI optimization gets wrong:

- Pushes all contracts toward statistical norms regardless of business context

- Flags necessary deviations from standards as "problematic"

- Scores terms based on training data patterns, not relationship needs

- Creates pressure to conform to AI recommendations that miss the point

The result is contracts that score well on AI metrics but fit actual business needs poorly. Parties end up with "optimized" terms that reflect what most agreements say rather than what this agreement should say.
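To make the liability cap example concrete, here is a minimal sketch of benchmark-relative scoring. It is not how any particular product works: the benchmark values, the function names, and the deal figures are all invented for illustration. The point is only that the percentile depends on the benchmark alone, so the same cap earns the same score in two deals with very different risk exposure.

```python
from bisect import bisect_right

# Hypothetical benchmark: liability caps expressed as multiples of annual fees,
# standing in for the agreements a scoring tool might have analyzed.
BENCHMARK_CAPS = [0.5, 1.0, 1.0, 1.0, 1.5, 2.0, 2.0, 3.0, 5.0, 10.0]


def benchmark_percentile(cap_multiple: float, benchmark: list[float]) -> float:
    """Share of benchmark caps at or below the proposed cap (0.0 to 1.0)."""
    ordered = sorted(benchmark)
    return bisect_right(ordered, cap_multiple) / len(ordered)


def covers_exposure(cap_multiple: float, annual_fees: float, exposure: float) -> bool:
    """Context check that a benchmark score never performs: does the cap,
    in currency terms, cover the loss this customer is worried about?"""
    return cap_multiple * annual_fees >= exposure


# One cap, one score: the percentile ignores everything about the deal.
score = benchmark_percentile(2.0, BENCHMARK_CAPS)

# Same cap and fees, two invented exposure figures, opposite answers.
small_deal = covers_exposure(2.0, annual_fees=50_000, exposure=80_000)
risky_deal = covers_exposure(2.0, annual_fees=50_000, exposure=2_000_000)

print(score, small_deal, risky_deal)
```

In this sketch the exposure figure has to come from someone who knows the deal, which is exactly the input a benchmark comparison does not have.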

The Dangerous Things AI Doesn't See

Contract review AI excels at finding what it's been trained to look for: standard clauses, common provisions, frequent patterns. It struggles with what's absent: provisions that should be present but aren't, commitments that need specificity but remain vague, risks that should be addressed but go unmentioned.

Human attorneys reviewing contracts don't just evaluate what's written; they notice what's missing. They recognize when a contract for AI services doesn't address training data usage, when a multi-year agreement lacks change management provisions, when international transactions are silent on jurisdiction and governing law.

Critical gaps AI contract review typically misses:

- Provisions that should exist but don't appear in the document

- Context-specific clauses required for this particular relationship

- Industry-specific protections relevant to the parties involved

- Future scenarios that generic analysis wouldn't anticipate

This creates dangerous blind spots. Parties might receive an AI analysis declaring a contract "favorable" or "low risk" when that analysis simply didn't consider categories of provisions relevant to their specific situation. The confidence that AI review provides becomes false confidence when it's based on an incomplete evaluation.
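The blind spot described above can be illustrated with a short sketch. A reviewer that only scores the clauses it finds has nothing to say about clauses that are absent, unless someone supplies a list of what this type of deal requires. The deal types, clause labels, and function name below are hypothetical, chosen to mirror the examples in this section rather than any real tool's taxonomy.

```python
# Hypothetical checklist: which clauses a given deal type should contain.
# In practice an attorney who knows the deal would decide what belongs here.
REQUIRED_BY_DEAL_TYPE = {
    "ai_services": {"training_data_usage", "liability_cap", "governing_law"},
    "multi_year": {"change_management", "liability_cap", "governing_law"},
}


def missing_provisions(deal_type: str, clauses_found: set[str]) -> set[str]:
    """Return required clause labels that the document does not contain."""
    required = REQUIRED_BY_DEAL_TYPE.get(deal_type, set())
    return required - clauses_found


# Clauses a review tool detected in an (invented) AI services agreement.
found = {"liability_cap", "governing_law", "payment_terms"}
gaps = missing_provisions("ai_services", found)

print(sorted(gaps))  # ['training_data_usage']
```

Every clause in `found` could score as "favorable" and the contract would still be silent on training data usage. The checklist is only as good as the judgment behind it, and an unknown deal type returns no gaps at all, which is itself a form of the false confidence described above.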

When Every AI Tool Says Something Different

The market now includes dozens of AI contract review platforms, each using proprietary algorithms and different training data. One platform flags a provision as concerning; another rates it as favorable. This leaves companies in the awkward position of deciding which AI to trust, a judgment call that requires exactly the expertise the AI was supposed to replace.

The conflicting guidance reflects underlying differences in how these systems were trained and what they optimize for. Tools trained predominantly on vendor contracts naturally favor vendor-friendly terms, and tools trained mostly on customer-side agreements lean the other way. None of these approaches is objectively correct; each reflects priorities that the tool bakes into its recommendations.
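One modest way to handle disagreement is to treat it as a signal rather than averaging it away. The sketch below assumes three unnamed tools that each return a simple verdict per clause. The tool names, clause names, and verdict labels are invented, and real platforms expose richer outputs than a single word.

```python
# Hypothetical verdicts from three unnamed review tools on the same contract.
verdicts = {
    "liability_cap": {"tool_a": "favorable", "tool_b": "concerning", "tool_c": "favorable"},
    "termination": {"tool_a": "favorable", "tool_b": "favorable", "tool_c": "favorable"},
    "indemnification": {"tool_a": "concerning", "tool_b": "favorable", "tool_c": "neutral"},
}


def needs_human_review(clause_verdicts: dict[str, str]) -> bool:
    """True when the tools do not all return the same verdict for a clause."""
    return len(set(clause_verdicts.values())) > 1


# Clauses where the tools disagree go to an attorney instead of a tie-breaker.
escalate = [clause for clause, v in verdicts.items() if needs_human_review(v)]

print(escalate)  # ['liability_cap', 'indemnification']
```

Agreement between tools is not proof of correctness, since tools trained on similar data can share the same bias. The sketch only shows where disagreement makes the need for judgment visible.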


What AI Can Never Replace

Experienced contract attorneys bring judgment that goes beyond evaluating whether specific clauses are favorable or risky. They understand the business context that makes certain provisions necessary or problematic for this particular deal.

The Context That Algorithms Miss

Attorneys recognize when standard terms won't work because this relationship has non-standard characteristics. They anticipate future scenarios that generic AI analysis wouldn't consider. This judgment involves weighing competing priorities that can't be reduced to scoring algorithms.

Sometimes accepting a less favorable liability cap is worth it to secure better payment terms. Sometimes including provisions that AI flags as risky is appropriate given the strategic importance of the relationship. Sometimes deviating from market standards makes sense because this deal isn't standard.

What human judgment considers that AI doesn't:

- Strategic importance of this specific vendor or customer relationship

- Company's risk tolerance for this particular contract type

- Industry-specific requirements that generic tools don't recognize

- Trade-offs between competing priorities (speed vs. favorable terms, relationship vs. protection)

- Long-term implications beyond what contract scoring measures

AI contract review systems can't make these judgment calls because they lack the business context that informs them. The tools don't know whether this vendor is the only provider of needed capabilities, whether this customer represents entry into a strategic market, or whether this relationship is transactional or a long-term partnership.

How Certify Fixes What AI Breaks

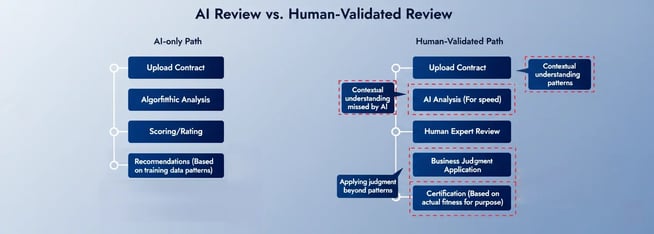

The solution isn't abandoning AI but combining it with human validation. TermScout's Certify platform addresses AI contract review limitations through a hybrid approach where AI handles pattern recognition and comparison while human legal experts provide judgment and context.

The platform uses AI to analyze contracts quickly, identifying provisions that deviate from standards, comparing terms to thousands of benchmarked agreements, and flagging potential issues. But this automated analysis gets validated by attorneys who evaluate whether flagged provisions are actually problematic in context.

What human validation catches:

- Provisions that score well algorithmically but don't work practically

- Missing elements that matter for this specific contract type

- Contextual appropriateness beyond favorable/unfavorable scoring

- Whether AI-recommended "optimizations" actually improve the contract

Validation also addresses the broader problems described earlier: optimizations that serve metrics rather than the parties, and conflicting guidance that confuses rather than clarifies. Contract analytics software works best when experts oversee it.

Why Skepticism About AI Tools Is Healthy

The current AI contract review boom requires healthy skepticism about what these tools can and can't deliver. They're valuable for initial analysis, pattern identification, and comparison to benchmarks. They're poor substitutes for judgment about contextual appropriateness, completeness relative to business needs, and whether terms will actually work for the parties involved.


Using AI Right vs. Using It Wrong

Companies should approach AI for contract review as an augmentation of human expertise rather than a replacement for it. The tools accelerate certain tasks—extracting key terms, comparing provisions to market standards, identifying common risk patterns.

But they don't eliminate the need for attorneys who understand the business, recognize context-specific requirements, and apply judgment to decide whether AI-recommended "optimizations" actually improve the contract.

Appropriate uses of AI contract review:

- Initial scanning for standard provisions and common patterns

- Rapid comparison of terms against market benchmarks

- Extraction of key data points from lengthy documents

- First-pass identification of obvious red flags

Where AI fails without human oversight:

- Determining if "favorable" terms actually fit the business relationship

- Identifying critical missing provisions specific to the situation

- Resolving conflicting guidance from multiple AI tools

- Weighing trade-offs that require business context

This skepticism extends to over-reliance on automated scoring and rating. When AI rates a contract as "favorable" or assigns high scores to provisions, these metrics reflect the algorithm's training and optimization targets, not necessarily the contract's quality for the specific situation.

The Certification That Actually Means Something

The distinction between scoring contracts and certifying them becomes critical. AI platforms excel at scoring, but contract certification requires a different kind of analysis. It evaluates whether contracts meet quality standards beyond favorable scoring: whether they address the issues that matter for this contract type and create workable frameworks for the relationship.

TermScout's Certify platform provides this certification by combining AI analysis with expert legal review.

| Evaluation Type | What It Measures | What It Misses | Value for Decision-Making |
| --- | --- | --- | --- |
| AI Scoring Only | How terms compare to training data patterns | Context, completeness, practical workability | Limited - may mislead |
| Pure Human Review | Context and judgment | Speed, systematic benchmark comparison | High but slow/expensive |
| Certified Review (AI + Human) | Patterns + context + fitness for purpose | Nothing - combines both strengths | Highest - fast and reliable |

This certified validation serves as quality assurance that pure AI contract review can't provide. Companies know their contracts have been evaluated by humans who specifically check for unfair terms.

Getting AI Right in Contract Review

The AI contract review boom has delivered genuine benefits: faster initial analysis, systematic comparison to market standards, and democratized access to contract intelligence. It has also created problems: optimization toward benchmarks without regard to context, blind spots for missing provisions, and conflicting guidance across tools.

The path forward involves appropriate skepticism about what AI tools can deliver and recognition that human validation remains necessary for contract quality. Contract review AI works best as an augmentation that accelerates certain tasks while humans provide the judgment these systems can't replicate.

Companies that understand this distinction use AI appropriately: for speed and pattern recognition, not as a replacement for attorneys. Those that treat it as a complete solution end up with contracts that are technically correct but practically problematic.

TermScout's Certify addresses the limitations that pure AI contract review creates by combining automated analysis with human legal validation focused specifically on what algorithms miss. This hybrid approach delivers both AI efficiency and human judgment, producing certified contracts that are not just favorably scored but actually fit for purpose.

Discover how Certify combines AI efficiency with human validation to avoid the pitfalls of pure AI contract review.
